Migrating from using Excel

This article gives you some pointers on how to migrate your current Excel-based QA and testing process to Meliora Testlab.

-

Introduction

If you’ve worked in the IT development field, you’ve probably been in a project that was managed by hand with spreadsheets, most likely Excel. We’ve all been there. In this article we go through some challenges of migrating your current process to a modern test management tool and give concrete advice on importing your data.

-

Typical spreadsheet based testing scenario

To describe a typical manual test management scenario with spreadsheets, let’s start off with the artifacts involved.

To design an application or a service, some kind of (requirement) specification must be made. It is common for the specification to be written as a separate document or, better yet, composed of requirements such as use cases, user stories or business requirements kept in a separate requirement management system.

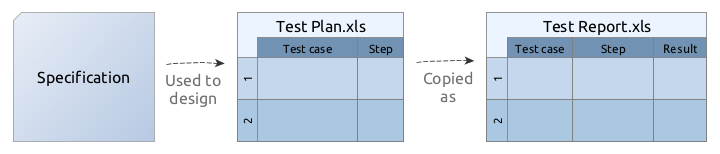

When the application is implemented, there must be a way to verify that the implementation works as designed. The specification is used to design a test plan, which typically consists of test cases and the steps to execute during testing. These steps are often described in a spreadsheet that makes up the test plan.

In addition, the results of the testing must be tracked and reported somehow. Typically, the spreadsheet-based test plan is written so that it can be copied and filled out to form a test report.

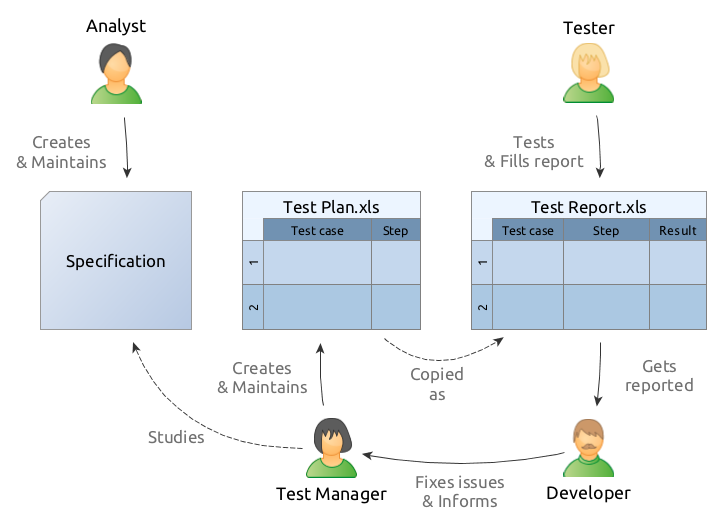

The above diagram describes a common process in a spreadsheet-based environment. Analysts designing the application are responsible for writing the specification document. This document is then stored somewhere and studied by the people responsible for testing.

The person responsible for managing the testing, the Test Manager in the diagram above, then familiarizes him/herself with the specification and writes down how the application should be tested to verify that it works the way the specification describes. A spreadsheet-based test plan is written, stored somewhere and delivered to the testers doing the actual testing.

While going through the steps and testing the application, a tester copies the test plan and fills it out as a spreadsheet-based test report. The test results are filled in and comments about any defects encountered are written down.

During a testing round it is common to encounter issues such as defects. These need to be fixed, so the tester sends the test report to the developers responsible for fixing them. As the issues are resolved, the developers inform the test manager. Fixed issues must be re-tested, which makes the process cyclic: the test plan (and possibly the specification) is updated and a new re-testing round is run.

There are variations, of course. But typically, some kind of test plan is written as a spreadsheet, stored somewhere and used as a reporting template for interested parties.

-

Challenges

The scenario above poses some challenges, which are often accentuated as the size of the project grows.

- Management requires a lot of dialogue and direct communication between the parties. For example, if a tester should test only part of the test plan, communicating what should be tested to the tester is laborious.

- Keeping the different assets and documents in sync is tedious. If and when the specification changes, it is often hard to know which test cases should be updated.

- Linking the different assets together is often hard or impossible. For example, it is often hard to know what should actually be re-tested once the defects found during testing have been fixed.

- Document management and versioning take a lot of work. How can you be sure that all the parties involved are reading the same versions of the documents? In addition, documents commonly go stale over time, so trusting that your test plan is up to date is difficult.

- Finding out the latest testing status is tough when it is spread across a large collection of test reports and other documents.

- Controlling access to the documents and assets is hard. When documents are sent around and delivered to third parties over time, it becomes practically impossible to control who has access to what.

- Handling the documents takes a lot of work. Typically, the more people involved, the more time is wasted passing documents around and keeping them up to date.

-

Doing things better

To ease testing-related work and overcome the challenges mentioned above, migrating your process to a centralized test management tool is preferable. Because all your testing-related assets are stored centrally, much less effort goes into passing documents around and keeping them up to date. It drives collaboration, as these tools usually offer features for assignments, commenting and sharing content. With a centralized data store, reporting is a breeze, with up-to-date views of your current testing and issue status.

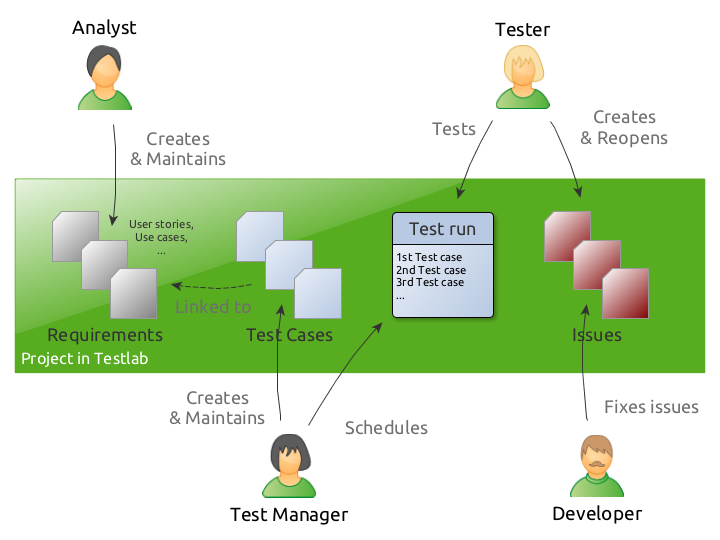

The above diagram shows a typical scenario in which a similar testing process is handled with a centralized test management tool, Meliora Testlab. A project in Testlab includes all quality-related assets of a single entity under testing, such as a software project, a system or an application, so different projects in your company are clearly separated from each other.

Analysts are responsible for compiling the features, needs and requirements of the application and for creating the user stories, use cases, business requirements or other types of assets that fit your software process. These requirements are verified by test cases, each with its own execution steps. The test cases are linked to the requirements they verify. This gives full transparency from application features, through test cases and the test runs in which they are executed, all the way to the issues found during testing.

-

Migrating spreadsheet-based data to Testlab

Migrating existing quality-related assets from spreadsheets to your Testlab project is straightforward. The easiest way to start is to log in to the “Demo” project in your instance and export its existing data to a file, which shows you the expected format. You can do this by choosing the appropriate export function from the “Testlab > Export” menu.

Keep in mind that the import requires the file to be in CSV format. If you have a CSV-capable editor, the easiest way is to export the data in CSV format. This way the file should be correctly formatted when you import it back.

You can also export the files in XLS format and edit them in an Excel-compatible editor, but then you must make sure to save the file correctly as CSV before importing it back into your Testlab project.

A detailed description of the supported format and of how to use the import features is available in the Testlab documentation.

-

How do I import the data

To import data, start from template files for the assets you want to import, such as the files exported from the Demo project. Familiarize yourself with the format and fill out the files with the data you want to import.

Alternatively, if you already have your data in a spreadsheet, modify it so that it conforms to the format Testlab supports.
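If your existing sheet uses its own column headings, one way to bring it in line with the template is a small script run on a comma-separated CSV copy of the sheet. The Python sketch below is only an illustration: the headings in the hypothetical `COLUMN_MAP` are placeholders, so replace both sides with your own headings and the ones in your Testlab template. It writes the result with `;` as the field separator and `"` as the text delimiter, a combination Testlab accepts.

```python
import csv
import io

# Hypothetical mapping: your spreadsheet's headings -> the Testlab template's
# headings. Both sides are placeholders; copy the real template headings from
# the file you exported from the Demo project.
COLUMN_MAP = {
    "Test name": "name",
    "Precondition": "precondition",
    "Expected result": "expected",
}


def remap_columns(src, dst, column_map):
    """Copy rows from a comma-separated CSV in src to dst, renaming columns.

    Columns not listed in column_map are dropped. The output uses ';' as
    the field separator and '"' as the text delimiter.
    """
    reader = csv.DictReader(src)
    writer = csv.DictWriter(dst, fieldnames=list(column_map.values()),
                            delimiter=";", quotechar='"')
    writer.writeheader()
    for row in reader:
        # Missing cells come back as None; write them as empty strings.
        writer.writerow({new: row.get(old) or "" for old, new in column_map.items()})


# Example: the "Owner" column is not mapped, so it is left out.
source = io.StringIO(
    "Test name,Precondition,Expected result,Owner\n"
    "Login,User account exists,Dashboard is shown,Anna\n"
)
target = io.StringIO()
remap_columns(source, target, COLUMN_MAP)
print(target.getvalue())
```

Unmapped columns, such as `Owner` in the example, are simply dropped, so review the output before importing it.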

When you are happy with the data, export it in CSV (Comma Separated Values) format. Both Excel and LibreOffice/OpenOffice can save a spreadsheet as CSV; see their documentation for the exact steps.

Make sure the file you export is in a format Testlab supports. A safe choice is to use the UTF-8 or ISO-8859-1 character set, a semicolon (;) as the field separator and a double quote (") as the text delimiter.
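If your spreadsheet tool produced a comma-separated file in some other character set, a short script can rewrite it with the settings listed above. This is a minimal Python sketch using only the standard `csv` module; the source encoding `cp1252` (a common Windows default) and the comma delimiter are assumptions about your export, not Testlab requirements, so adjust them to match your file.

```python
import csv
import os
import tempfile


def convert_to_testlab_csv(src_path, dst_path, src_encoding="cp1252", src_delimiter=","):
    """Rewrite a CSV file as UTF-8 with ';' separators and '"' text delimiters.

    src_encoding and src_delimiter describe the file your spreadsheet tool
    produced; cp1252 and ',' are only assumptions, adjust them to your file.
    """
    with open(src_path, newline="", encoding=src_encoding) as src:
        rows = list(csv.reader(src, delimiter=src_delimiter, quotechar='"'))
    with open(dst_path, "w", newline="", encoding="utf-8") as dst:
        # Fields containing ';', '"' or line breaks are quoted automatically.
        csv.writer(dst, delimiter=";", quotechar='"').writerows(rows)


# Example run on temporary files.
with tempfile.TemporaryDirectory() as tmp:
    src_path = os.path.join(tmp, "exported.csv")
    dst_path = os.path.join(tmp, "for_testlab.csv")
    with open(src_path, "w", newline="", encoding="cp1252") as f:
        f.write('name,description\r\nLogin,"Open the café page; then log in"\r\n')
    convert_to_testlab_csv(src_path, dst_path)
    with open(dst_path, newline="", encoding="utf-8") as f:
        converted = f.read()

print(converted)
```

If your file starts with a UTF-8 byte order mark, passing `src_encoding="utf-8-sig"` makes Python strip it so it does not end up in the first column heading.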

Log in to the project you would like to import the assets into. From the “Testlab > Import” menu, select the type of asset you would like to import and run the import tool on your CSV file. You can first run the import in “Dry run” mode, which only validates your import file for errors and warnings. The process output shows how the import proceeds and, at the end, gives you a summary of any warnings encountered. When you are happy with the result, run the actual import by unchecking the “Dry run” option.

Keep in mind that you might have to refresh the hierarchy tree you imported the assets into before they show up in your view.

-

I only have test cases

You only have test cases described in a spreadsheet but no requirements defined? This poses no problem: a good strategy is to first import your test cases into Testlab and create the needed requirements for your project later on. You can then bind the imported test cases to these requirements easily by dragging and dropping.

This strategy gives you good visibility into your testing status later on. For example, let’s assume that you have a set of user-interface-related acceptance tests in your project but no related requirements. You would just like to see how the different views of your application’s user interface are tested. You can then create a matching requirement in your Testlab project for each application view and bind the appropriate test cases to these requirements (views). After this, the Coverage view of your project (and other requirement-related reports) in Testlab always shows the testing status and issues mapped to the views of your application. Voila!

-

Automated testing

If you’ve automated part of your testing, you might have many unit (or other automated) tests whose status you would like to follow. When pushing automated test results to your Testlab project, you need a matching test case in Testlab for each of your tests, and import is a handy way of creating them. If you export a list of your unit tests to a text file, it is fairly trivial to form a suitable CSV file from the template described above and use it to import the test case stubs into Testlab.
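As an illustration, the Python sketch below turns a plain text list of test names (one per line) into a semicolon-separated CSV of test case stubs. The `name` and `description` column headings are hypothetical stand-ins for the headings in your test case template, and naming each stub after the fully qualified test name is an assumption; check how your automated results are matched to test cases before relying on it.

```python
import csv
import io


def stubs_from_test_list(lines, out):
    """Write one test case stub row per unique, non-empty test name.

    The header row uses hypothetical column names; replace them with the
    headings of the test case template you are importing with.
    """
    writer = csv.writer(out, delimiter=";", quotechar='"')
    writer.writerow(["name", "description"])
    seen = set()
    for line in lines:
        name = line.strip()
        if name and name not in seen:  # skip blank lines and duplicates
            seen.add(name)
            writer.writerow([name, "Automated test " + name])


# A list of unit tests, e.g. exported from your test runner to a text file.
test_list = """
com.example.LoginTest.testValidLogin
com.example.LoginTest.testInvalidPassword

com.example.CartTest.testAddItem
com.example.LoginTest.testValidLogin
""".splitlines()

out = io.StringIO()
stubs_from_test_list(test_list, out)
print(out.getvalue())
```

Blank lines and duplicate names are skipped, so a list that repeats a test does not produce two stubs for it.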

-

Advantages gained

There you have it. By moving your QA process from a highly manual, labor-intensive, spreadsheet-based process to a modern quality management solution, you drive collaboration, save time and gain better insight into the quality, testing and issue status of your projects.