Test automation is a well-established way to improve your testing process. Still, manual testing with predefined steps is surprisingly common, and especially in acceptance testing we often put our trust in the good old tester. For organizations pursuing gains from test automation, it is particularly important to unify manual and automated testing so that both can be managed and reported transparently.

Not all automated testing is alike

The gains from test automation are numerous: automated testing saves time, makes tests easily repeatable and less error-prone, makes distributed testing possible, and improves test coverage, to name a few. It should be noted, though, that not all automated testing is the same. For example, modern test harnesses and tools make it possible to automate and execute complex UI-based acceptance tests, while at the same time developers implement low-level unit tests. From the reporting standpoint, it is essential to be able to combine the results from all kinds of tests into a manageable and approachable view with the correct level of detail.

I don’t know what our automated tests do and what they cover

Testers in an organization often waste time manually testing features that are already covered by a good set of automated tests. This happens because test managers don’t always know the details of the (often very technical) automated tests. As a result, the automated tests are not trusted, and their results are hard to combine into the overall testing status.

This problem is often complicated by the fact that many test management tools report the results of manual and automated tests separately. In the worst-case scenario, the test manager must know how the automated tests work to be able to make a judgment on the coverage of the testing.

Scoping the automated tests in your test plan

Because the nature of automated tests varies, it is important that the test management tool offers an easy way to scope and map the results of your automated tests to your test plan. Reporting the status of each and every test case is often not desirable (especially for low-level unit tests), because it makes it harder to see the overall picture of your testing status. The results of these tests still deserve attention, though, so that any failures in them get reported.

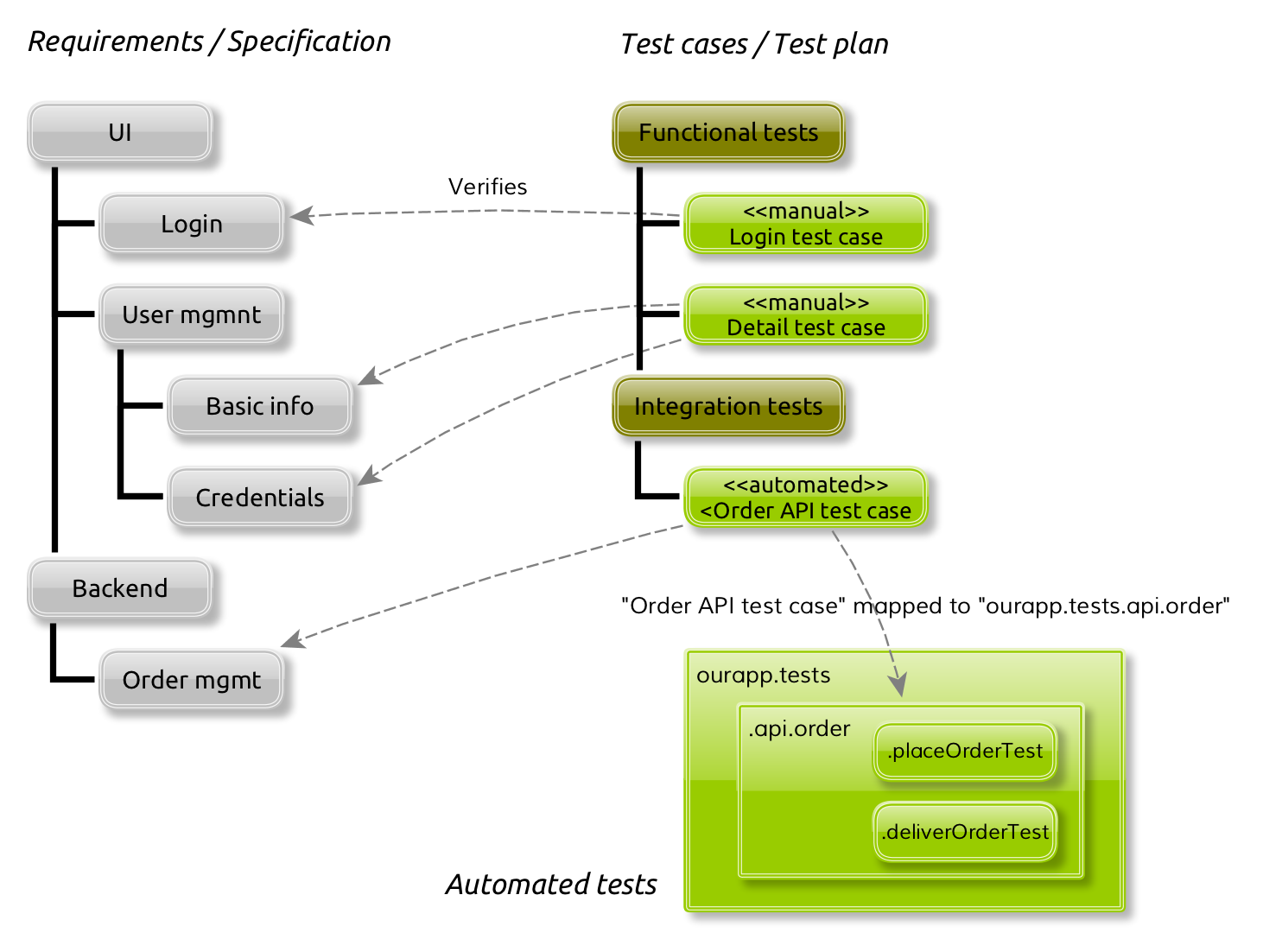

Let’s take an example of how the automated tests are mapped in Meliora Testlab.

The example above shows a simple hierarchy of functions (requirements) that are verified by test cases in the test plan:

- The UI / Login function is verified by the manual test case “Login test case”,

- The UI / User mgmnt / Basic info and UI / User mgmnt / Credentials functions are verified by the functional manual test case “Detail test case”, and

- The Backend / Order mgmt functions are verified by automated tests mapped to the test case “Order API test case” in the test plan.

Mapping is done by simply specifying the package identifier of the automated tests for a test case. When testing, the results of tests are always recorded to test cases:

- The login view and the user management view of the application are tested manually by the testers, and the results of these tests are recorded to the test cases “Login test case” and “Detail test case”.

- The order management is tested automatically, with results coming from the automated tests “ourapp.tests.api.order.placeOrderTest” and “ourapp.tests.api.order.deliverOrderTest”. These automated tests are mapped to the test case “Order API test case” via the automated test package “ourapp.tests.api.order”.

The final result for the test case “Order API test case” is derived from the results of all automated tests under the package “ourapp.tests.api.order”. If one or more tests in this package fail, the test case is marked as failed. If all tests pass, the test case is marked as passed.
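The derivation rule described above can be illustrated with a minimal Python sketch. This is not Testlab's implementation or API; the function names, the boolean result format, and the handling of a package with no reported results are assumptions made for illustration only.

```python
from typing import Dict, Optional

# Assumed mapping format: test case name -> automated test package identifier.
MAPPINGS = {"Order API test case": "ourapp.tests.api.order"}


def in_package(test_id: str, package: str) -> bool:
    """True if test_id is the package itself or lies beneath it."""
    return test_id == package or test_id.startswith(package + ".")


def derive_result(package: str, results: Dict[str, bool]) -> Optional[str]:
    """Derive a test case result from all automated results under a package."""
    outcomes = [ok for test_id, ok in results.items() if in_package(test_id, package)]
    if not outcomes:
        return None  # assumption: no reported results means no derived result
    # One or more failures fail the test case; otherwise it passes.
    return "passed" if all(outcomes) else "failed"


results = {
    "ourapp.tests.api.order.placeOrderTest": True,
    "ourapp.tests.api.order.deliverOrderTest": False,
}
print(derive_result(MAPPINGS["Order API test case"], results))  # prints "failed"
```

Matching on the package name plus a trailing dot keeps a sibling package with a similar name, such as a hypothetical “ourapp.tests.api.orders”, from being counted by accident.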

Because automated tests are mapped via their package hierarchy, it is easy to fine-tune the level of detail at which your automated tests are scoped to your test plan. In the example above, if it is deemed necessary to always report detailed results for order delivery tests, the automated test “ourapp.tests.api.order.deliverOrderTest” can be mapped to a test case in the test plan.

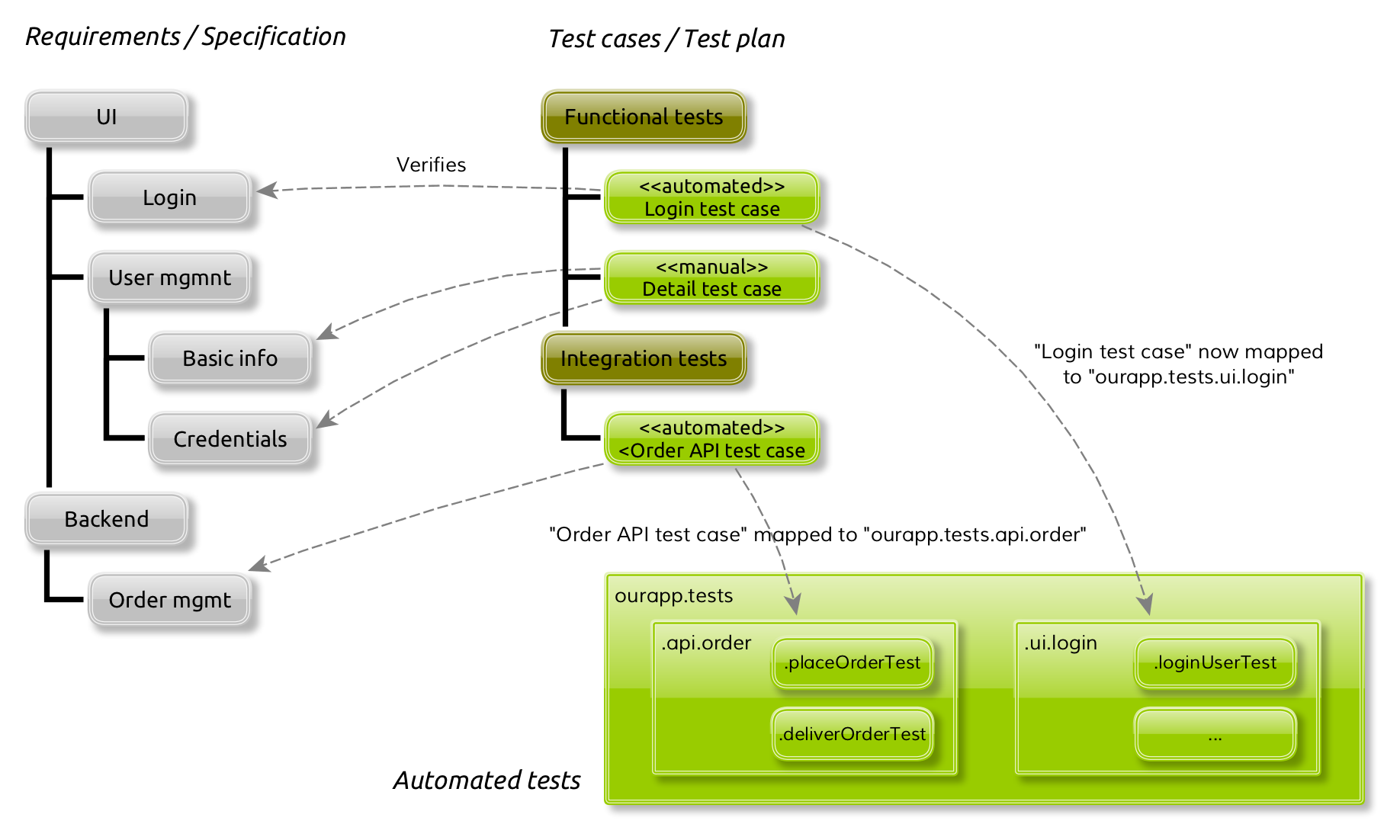
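As a rough sketch of such fine-tuning, the Python example below maps one test case to the whole package and another to a single automated test. The test case name “Order delivery test case” is invented for illustration, and the sketch simply evaluates each mapping on its own; how a real tool resolves overlapping mappings is not covered here.

```python
# Assumed mapping format: test case name -> package or test identifier.
# "Order delivery test case" is a hypothetical name, not taken from the example.
mappings = {
    "Order API test case": "ourapp.tests.api.order",
    "Order delivery test case": "ourapp.tests.api.order.deliverOrderTest",
}

results = {
    "ourapp.tests.api.order.placeOrderTest": "passed",
    "ourapp.tests.api.order.deliverOrderTest": "failed",
}


def matches(test_id, identifier):
    """True if test_id equals the identifier or lies beneath it."""
    return test_id == identifier or test_id.startswith(identifier + ".")


def report(mappings, results):
    """Return a status per test case, based on the results its identifier covers."""
    summary = {}
    for case, identifier in mappings.items():
        matched = [status for test_id, status in results.items()
                   if matches(test_id, identifier)]
        if matched:
            summary[case] = "failed" if "failed" in matched else "passed"
    return summary


for case, status in report(mappings, results).items():
    print(f"{case}: {status}")
```

A coarse identifier yields one summarized line in the report, while a fully qualified test identifier yields a line of its own for that single test.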

Automating existing manual tests

Because test automation has clear benefits for your testing process, the process and the tools used to manage it should support easy automation of existing manual tests. From the test management tool’s standpoint, it is not relevant which technique is used to actually automate a test. What matters is that the reporting and coverage analysis stay the same and that the results of the automated tests are easily pushed to the tool.

To continue the example above, let’s presume that the login-related manual tests (“Login test case”) are automated using Selenium:

The test designers record and create the automated UI tests for the login view in the package “ourapp.tests.ui.login”. The manual test case “Login test case” can now be mapped to these tests with the identifier “ourapp.tests.ui.login”. The test cases themselves, the requirements, and the structure of both need no changes. When the Selenium-based tests are later run, their results determine the result of the test case “Login test case”. The reporting of the testing status stays the same, the structure of the test plan stays the same, and the related reports remain easy to approach for the people already familiar with them.
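The switch from manual to automated results can be sketched in Python as follows. The `PlanCase` class, the `result_for` function, the "not run" status, and the two Selenium test names are all hypothetical; the sketch only shows that adding a package identifier changes where the result comes from, not the test case or its place in the plan.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass
class PlanCase:
    """A test case in the test plan (hypothetical structure)."""
    name: str
    verifies: str                             # the function (requirement) it verifies
    automated_package: Optional[str] = None   # set once the tests are automated


def result_for(case: PlanCase,
               manual_results: Dict[str, str],
               automated_results: Dict[str, bool]) -> str:
    """Return the status of a test case from manual or automated results."""
    if case.automated_package is None:
        return manual_results.get(case.name, "not run")
    prefix = case.automated_package + "."
    outcomes = [ok for test_id, ok in automated_results.items()
                if test_id.startswith(prefix)]
    if not outcomes:
        return "not run"
    return "passed" if all(outcomes) else "failed"


login = PlanCase("Login test case", "UI / Login")
manual = {"Login test case": "passed"}
print(result_for(login, manual, {}))  # result recorded by a tester

# After automation only the mapping is added; name and requirement are unchanged.
login.automated_package = "ourapp.tests.ui.login"
selenium_results = {
    "ourapp.tests.ui.login.validLoginTest": True,        # hypothetical test name
    "ourapp.tests.ui.login.invalidPasswordTest": False,  # hypothetical test name
}
print(result_for(login, manual, selenium_results))  # result derived from automated runs
```

Because the report reads the status through the same test case in both situations, anything built on top of the test plan keeps working without changes.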

Summary

Test automation and manual testing are most often best used in combination. It is important that the tools used for test management support reporting on this kind of combined testing as flexibly as possible.

(Icons used in illustrations by Thijs & Vecteezy / Iconfinder)